How AI Understands Language (Even Though It Doesn’t Actually Understand)

Artificial intelligence can write essays, answer questions, summarize articles, and even hold conversations that feel surprisingly human. But here’s the strange part: AI doesn’t actually understand language the way people do.

Instead, it relies on patterns, probabilities, and predictions.

Let’s take a closer look at how AI processes language and why it sometimes makes mistakes that reveal how it really works.

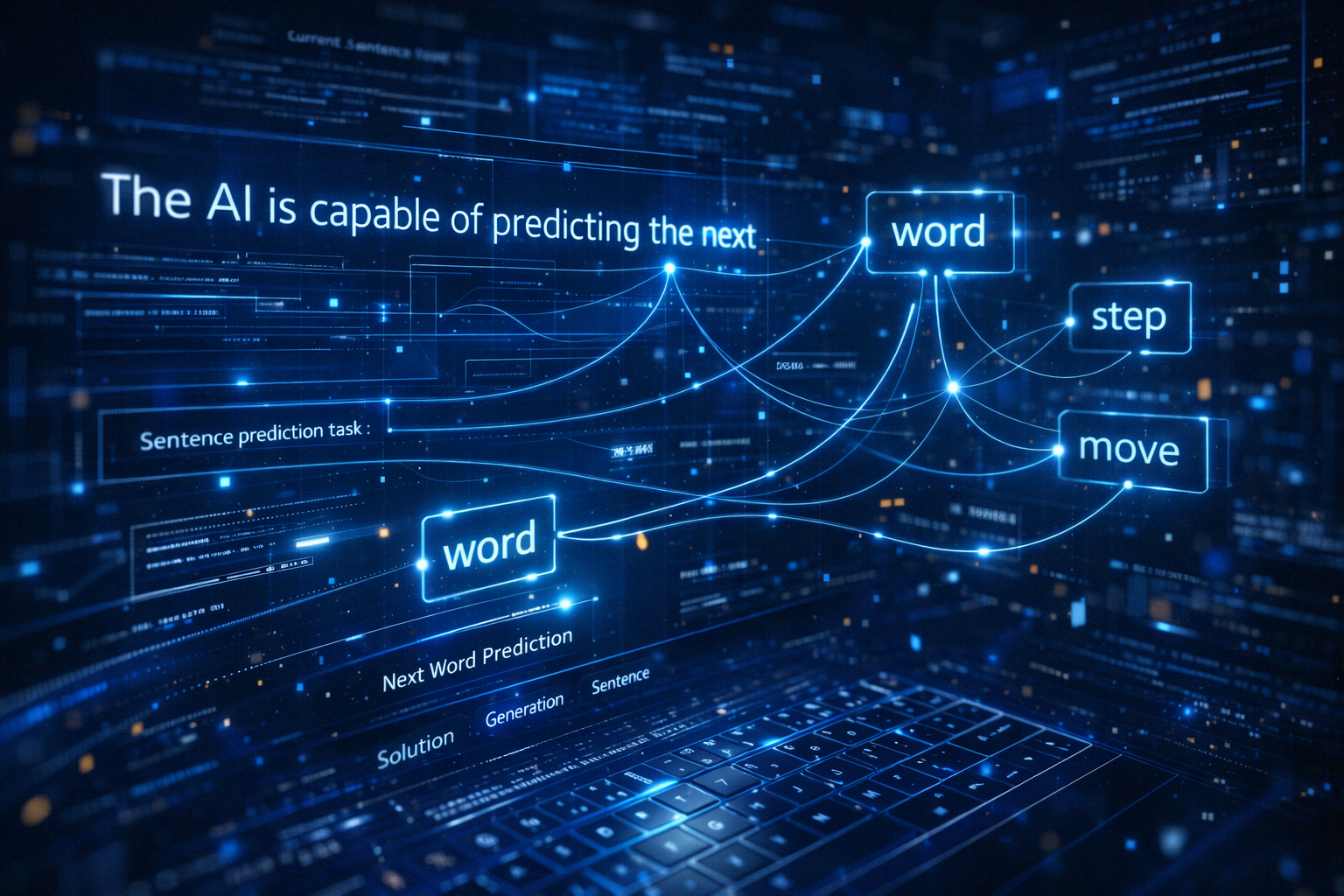

AI Predicts Words Rather Than Understanding Meaning

Most modern AI chat systems are built using large language models (LLMs). These models are trained on enormous datasets that include books, articles, websites, and other text sources.

During training, the model practices answering one very specific question over and over:

“What word is most likely to come next?”

For example, if the model sees a sentence like:

The sun rises in the _____.

The most probable next word is “east,” because the model has seen that pattern many times during training.

AI uses this same prediction method to generate entire paragraphs. Each new word is chosen using probabilities calculated from the words that came before it, and is then added to the text so the next prediction can build on it.

This is why AI can produce very natural-sounding writing—even though it’s technically just predicting text step by step.
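To make the idea concrete, here is a minimal Python sketch. It is not how a real LLM works internally: real models use neural networks over tokens and far longer context. As a simple stand-in, it uses a counting model (a trigram model) that looks only at the previous two words, counts which word followed them in a tiny invented training set, and turns those counts into probabilities. The function names `next_word_probabilities` and `generate` are made up for this example.

```python
from collections import Counter, defaultdict

# A tiny, invented "training set". Real models learn from vastly more text.
corpus = [
    "the sun rises in the east",
    "the sun sets in the west",
    "the sun rises in the east every morning",
]

# For every pair of consecutive words, count which word came next.
next_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for a, b, nxt in zip(words, words[1:], words[2:]):
        next_counts[(a, b)][nxt] += 1


def next_word_probabilities(a, b):
    """Turn the raw counts for the context (a, b) into probabilities."""
    counts = next_counts[(a, b)]
    total = sum(counts.values())
    return {word: count / total for word, count in counts.items()}


def generate(first, second, max_new_words=10):
    """Greedy generation: keep appending the single most likely next word."""
    words = [first, second]
    for _ in range(max_new_words):
        probs = next_word_probabilities(words[-2], words[-1])
        if not probs:
            break  # this word pair was never followed by anything in the corpus
        words.append(max(probs, key=probs.get))
    return " ".join(words)


print(next_word_probabilities("in", "the"))
print(generate("the", "sun"))  # the sun rises in the east every morning
```

Here `next_word_probabilities("in", "the")` gives “east” a probability of 2/3 and “west” 1/3, simply because “in the east” appears twice in the toy data and “in the west” once. Real chatbots often sample from these probabilities instead of always taking the top word, which is one reason their answers can vary.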

The Role of Tokens

AI doesn’t read words the same way humans do. Instead, it breaks text into smaller pieces called tokens.

A token might be:

A whole word

Part of a word

A piece of punctuation

For example, the sentence:

Artificial intelligence is fascinating.

could be broken into tokens like this:

Artificial | intelligence | is | fascinating | .

These tokens are converted into numbers so the AI can process them mathematically.
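A toy version of this step might look like the Python below. It assumes a simple word-level tokenizer that splits text into words and punctuation marks and numbers each new token in order of first appearance. Real LLM tokenizers (byte-pair encoding, for example) use learned subword vocabularies with tens of thousands of entries or more, so their splits and ID numbers will differ. `tokenize` and `build_vocab` are hypothetical helper names.

```python
import re


def tokenize(text):
    """Split text into word tokens and single-character punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text)


def build_vocab(tokens):
    """Give each distinct token an integer ID, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


tokens = tokenize("Artificial intelligence is fascinating.")
vocab = build_vocab(tokens)
ids = [vocab[tok] for tok in tokens]

print(tokens)  # ['Artificial', 'intelligence', 'is', 'fascinating', '.']
print(ids)     # [0, 1, 2, 3, 4]
```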

Once the tokens are numbers, the AI analyzes the relationships between them to decide what should come next in a sentence.
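One common way a model turns its internal scores for possible next tokens into probabilities is the softmax function. The sketch below applies softmax to a handful of made-up scores for what might follow “The sun rises in the”. The scores and the short candidate list are invented for illustration; a real model scores every token in its vocabulary.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that are positive and sum to 1."""
    biggest = max(scores.values())  # subtracting the max avoids overflow in exp()
    exps = {tok: math.exp(s - biggest) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Hypothetical scores for candidate next tokens after "The sun rises in the".
scores = {"east": 4.0, "morning": 2.0, "west": 0.5, "banana": -3.0}
probs = softmax(scores)

# Print candidates from most to least likely.
for tok, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{tok:>8}: {p:.3f}")
```

Higher scores receive disproportionately more probability: with these numbers, “east” ends up with roughly 86% of the total and “banana” with well under 1%.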

Why AI Sounds Confident Even When It’s Wrong

Because AI predicts text based on patterns, it sometimes produces answers that sound correct but are actually inaccurate.

This phenomenon is known as an AI hallucination.

It happens when the model generates information that fits familiar language patterns but was never checked against actual facts.

For example, if you ask an AI about a rare historical event or a book that doesn’t exist, it might invent details that seem believable.
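A counting model can show, in miniature, how fluent but false text arises. The sketch below uses a bigram model (it only remembers which word followed which) trained on two true sentences, then lists every sentence it can produce. This is a deliberately simplified illustration under those assumptions, not a description of how any particular chatbot hallucinates; `all_sentences_from` is a hypothetical helper name.

```python
from collections import defaultdict

# Two true sentences form the entire (tiny, invented) training set.
corpus = [
    "paris is the capital of france",
    "rome is the capital of italy",
]

# Bigram model: remember which words have followed each word.
followers = defaultdict(set)
for sentence in corpus:
    words = sentence.split()
    for current, nxt in zip(words, words[1:]):
        followers[current].add(nxt)


def all_sentences_from(word):
    """Return every word sequence the model can produce starting at `word`."""
    if not followers[word]:
        return [[word]]  # nothing ever followed this word, so the sentence ends
    results = []
    for nxt in sorted(followers[word]):
        for rest in all_sentences_from(nxt):
            results.append([word] + rest)
    return results


generated = [" ".join(ws) for start in ("paris", "rome") for ws in all_sentences_from(start)]
for sentence in generated:
    label = "in training data" if sentence in corpus else "NEW: not in training data"
    print(f"{sentence:32} {label}")
```

Alongside the two training sentences, the model also produces “paris is the capital of italy.” Every word pair in it is familiar, so it fits the learned patterns perfectly, yet nothing in the model checks whether it is true.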

That’s why AI should always be used as a tool for assistance, not as the sole source of truth.

Context Is the Secret to Good AI Responses

One thing AI does very well is track context.

If you ask:

Who was the first person on the moon?

The AI answers:

Neil Armstrong.

But if your next question is:

When did he land there?

The AI correctly treats “he” as Neil Armstrong, because the earlier question and answer are still part of the text it is working from.

This ability to follow conversational context is what makes modern AI assistants feel more natural to interact with.
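Chat systems typically achieve this by sending the earlier conversation back to the model together with each new message, so the “memory” is really just more text to predict from. The sketch below shows the idea. The `User:`/`Assistant:` labels and the `build_prompt` helper are hypothetical; real products use their own formats.

```python
def build_prompt(history, new_question):
    """Combine earlier turns and the new question into one block of text.

    Hypothetical format: each turn becomes a "Speaker: text" line, and the
    prompt ends with "Assistant:" so the model continues from there.
    """
    lines = [f"{speaker}: {text}" for speaker, text in history]
    lines.append(f"User: {new_question}")
    lines.append("Assistant:")
    return "\n".join(lines)


history = [
    ("User", "Who was the first person on the moon?"),
    ("Assistant", "Neil Armstrong."),
]

prompt = build_prompt(history, "When did he land there?")
print(prompt)
```

Because “Neil Armstrong.” sits just above “he” in this combined text, answering the follow-up becomes one more pattern-completion step.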

However, context can also confuse AI when questions involve sarcasm, jokes, or tricky phrasing.

Why AI Struggles With Wordplay and Trick Questions

Humans rely heavily on intuition and real-world understanding when interpreting language.

AI doesn’t have lived experience. It only has data patterns.

That means certain types of questions can trip it up, especially:

Puns

Riddles

Logical traps

Sarcasm

Ambiguous phrasing

These are areas where humans often outperform AI.

Try to Stump an AI

Now that you know how AI generates language, here’s a fun experiment.

Open a free AI chatbot such as ChatGPT, Gemini, or Claude and try asking it a tricky question.

Start with this one:

If a vegetarian eats vegetables, what does a humanitarian eat?

The punchline is “humans,” but many AI systems may sidestep the joke and give a serious explanation of what a humanitarian is.

You can also try asking questions involving:

Wordplay

Logic puzzles

Counting letters in words

Confusing instructions

Sometimes the AI will get it right.

Sometimes it won’t.

And when it doesn’t, you’ll get a glimpse of how AI really works behind the scenes.
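Letter counting is a good example of that glimpse. The Python sketch below contrasts counting characters directly with what a model actually receives. The split of “strawberry” into three tokens and the ID numbers are invented for illustration; real tokenizers split words differently from model to model.

```python
word = "strawberry"

# With direct access to the characters, counting letters is trivial.
r_count = word.count("r")
print(r_count)  # 3

# A language model does not see characters. Suppose (hypothetically) its
# tokenizer split the word into these pieces; real splits vary by model.
hypothetical_tokens = ["str", "aw", "berry"]

# Invented token IDs, for illustration only.
hypothetical_vocab = {"str": 101, "aw": 102, "berry": 103}
token_ids = [hypothetical_vocab[t] for t in hypothetical_tokens]
print(token_ids)  # [101, 102, 103]

# The IDs carry no explicit letter information: nothing in [101, 102, 103]
# says how many times "r" appears in the original word.
```

Because the model works with token IDs rather than individual letters, one common explanation for letter-counting mistakes is that it has to rely on whatever it has learned about how each token is spelled.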

Final Thought

Artificial intelligence may seem like it understands language, but what it’s really doing is predicting patterns in text based on massive amounts of training data.

That prediction ability is powerful enough to generate conversations, articles, and even creative writing.

But it also means AI can still be fooled.

So the next time you talk to an AI assistant, try giving it a puzzle and see if you can stump the machine.